Picture a massive city’s water system: raw river water is drawn in, filtered through treatment plants, pumped through pipes, and flows crystal-clear from your tap. One leak or pump failure, and chaos ensues. Swap water for data, and you’ve got a data pipeline: the invisible backbone that automates how raw info from apps, sensors, and servers is transformed into actionable gold.

In the 2026 data explosion (trillions of IoT pings daily), pipelines prevent “data drownings.” They’re more than scripts; they’re resilient systems for ingestion, processing, and delivery. Let’s dissect them layer by layer, with real mechanics and stakes.

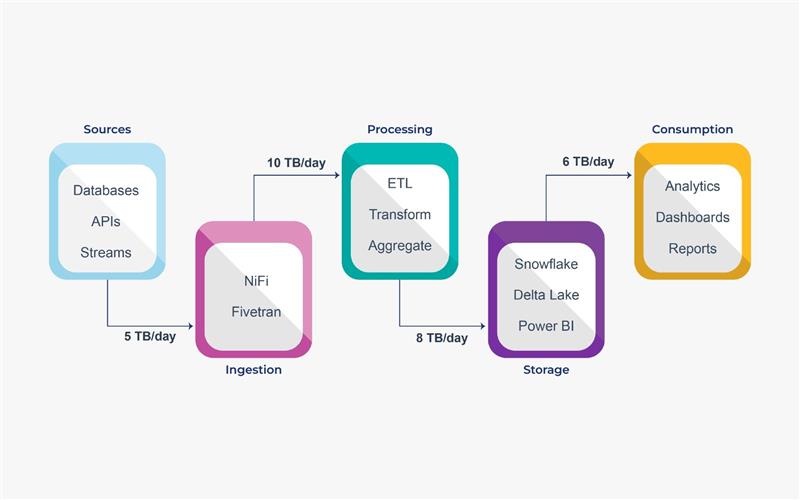

Core Components: Breaking Down the Pipeline Blueprint

A data pipeline is a sequence of interconnected stages, orchestrated like a symphony. Miss a note, and the music sours.

- Sources: Where data lives: relational DBs (PostgreSQL), NoSQL (MongoDB), files (CSV/JSON in S3), streams (Kafka for live tweets), or APIs (Stripe payments).

- Ingestion Layer: Pulls or pushes data reliably. Batch (hourly files) vs. streaming (sub-second events). Tools: Apache NiFi for drag-and-drop, Fivetran for managed connectors.

- Processing/ETL (Extract-Transform-Load): The brains. Extract raw bits, transform them (clean nulls, normalize currencies, join datasets, apply ML scoring), and load them to destinations. ELT flips the order: load first, then transform in-warehouse for speed.

- Orchestration: The conductor. Schedules jobs, tracks dependencies (e.g., “clean sales before aggregating”), and retries failures. Airflow DAGs (Directed Acyclic Graphs) visualize this.

- Storage/Destinations: Warehouses (Snowflake for SQL queries), lakes (Delta Lake for raw + refined), or apps (Power BI dashboards).

- Monitoring/Observability: Logs metrics (latency, error rates) and sends alerts (e.g., Slack pings on a 5% drop-off).
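To make the ETL-versus-ELT distinction from the Processing bullet concrete, here is a minimal sketch that uses Python’s built-in sqlite3 as a stand-in for a warehouse. The table names and sample rows are made up for illustration; a real pipeline would target something like Snowflake or BigQuery instead.

```python
import sqlite3

# Hypothetical raw order rows: (order_id, product, amount as text, currency)
RAW_ORDERS = [
    (1, "widget", "19.99", "USD"),
    (2, "widget", None, "USD"),  # missing amount, should be dropped
    (3, "gadget", "5.00", "USD"),
]


def etl(conn):
    """ETL: clean in Python first, then load only the finished rows."""
    clean = [
        (order_id, product, float(amount))
        for order_id, product, amount, _currency in RAW_ORDERS
        if amount is not None
    ]
    conn.execute(
        "CREATE TABLE orders_etl (order_id INTEGER, product TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?, ?)", clean)


def elt(conn):
    """ELT: load raw rows untouched, then transform with SQL inside the store."""
    conn.execute(
        "CREATE TABLE orders_raw "
        "(order_id INTEGER, product TEXT, amount TEXT, currency TEXT)"
    )
    conn.executemany("INSERT INTO orders_raw VALUES (?, ?, ?, ?)", RAW_ORDERS)
    conn.execute(
        "CREATE TABLE orders_elt AS "
        "SELECT order_id, product, CAST(amount AS REAL) AS amount "
        "FROM orders_raw WHERE amount IS NOT NULL"
    )


def run_both():
    """Run both styles against one in-memory database and return both results."""
    conn = sqlite3.connect(":memory:")
    etl(conn)
    elt(conn)
    query = "SELECT order_id, product, amount FROM {} ORDER BY order_id"
    etl_rows = conn.execute(query.format("orders_etl")).fetchall()
    elt_rows = conn.execute(query.format("orders_elt")).fetchall()
    conn.close()
    return etl_rows, elt_rows
```

Both paths end with the same clean table. The difference is where the transformation runs and whether the raw rows are kept around for later reprocessing.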

Pipelines are classified as batch (e.g., daily CRM syncs: cost-effective, simpler), streaming (e.g., Uber ride pricing: low-latency, complex), or lambda (both, for flexibility).
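The batch/streaming split is easiest to see side by side. The sketch below is a toy, assuming events are simple (product, amount) pairs: the batch function waits for the whole window and aggregates once, while the streaming function updates running totals and emits a fresh snapshot after every event.

```python
from collections import defaultdict


def batch_revenue(events):
    """Batch: take the full window of events, then aggregate once."""
    totals = defaultdict(float)
    for product, amount in events:
        totals[product] += amount
    return dict(totals)


def streaming_revenue(events):
    """Streaming: update running totals per event and yield a snapshot each time."""
    totals = defaultdict(float)
    for product, amount in events:
        totals[product] += amount
        yield dict(totals)
```

A lambda-style setup would run both: the streaming path for fresh but provisional numbers, and the batch path to produce the authoritative totals later.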

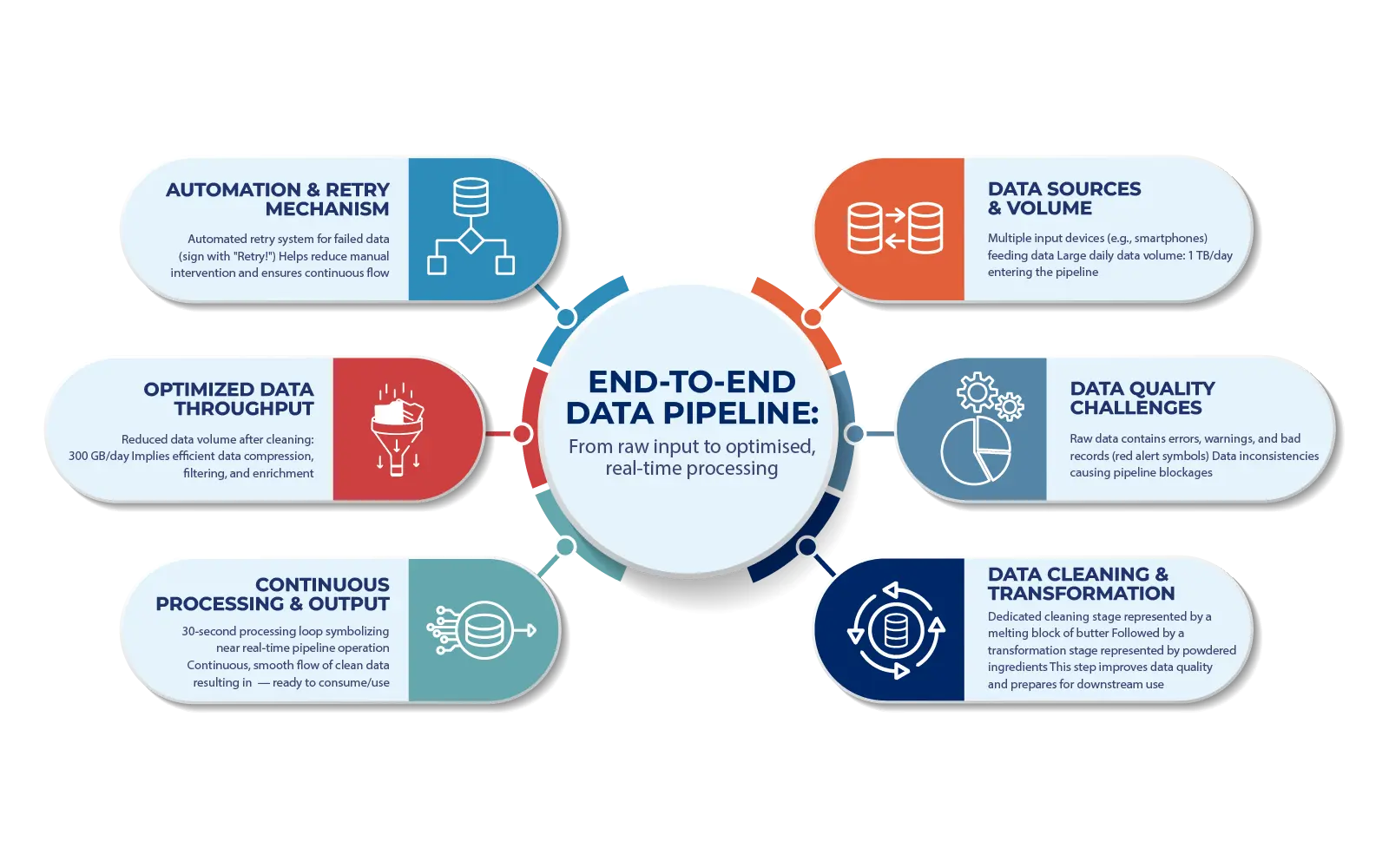

How It All Flows: Step-by-Step Mechanics with Real Examples

Let’s trace a pipeline end-to-end, using an e-commerce giant like Shopify analyzing Black Friday.

- Ingestion Fires Up: 10M orders stream via Kafka topics. Change Data Capture (CDC) tools like Debezium snag DB updates in real-time.

- Validation & Cleansing: Drop invalid ZIPs, dedupe repeats, enrich with geo-IP.

- Transformation Deep Dive: Aggregate daily revenue per product and join with inventory. ML: predict churn via logistic regression, \( P(y = 1) = \frac{1}{1 + e^{-(\beta_0 + \beta_1 x)}} \). dbt models version-control this, like Git for SQL.

- Orchestration Ensures Order: Airflow runs Task A (clean) → B (aggregate) → C (load). If B fails, retry 3x or roll back.

- Delivery & Serving: BigQuery warehouse queries in seconds. Data flows to Looker dashboards or recommendation APIs.
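The clean → aggregate → load chain above can be sketched in plain Python. This is not Airflow code: it uses the standard library’s graphlib to resolve task order and a small hypothetical retry helper, and it assumes 5-digit US ZIP codes and an in-memory dict standing in for the warehouse.

```python
import re
from collections import defaultdict
from graphlib import TopologicalSorter

ZIP_RE = re.compile(r"\d{5}")  # assumption: 5-digit US ZIP codes only


def clean(orders):
    """Drop orders with invalid ZIPs and de-duplicate on order_id (first wins)."""
    seen, kept = set(), []
    for order in orders:
        if ZIP_RE.fullmatch(order["zip"]) and order["order_id"] not in seen:
            seen.add(order["order_id"])
            kept.append(order)
    return kept


def aggregate(orders):
    """Sum revenue per product."""
    totals = defaultdict(float)
    for order in orders:
        totals[order["product"]] += order["amount"]
    return dict(totals)


def run_with_retries(task, arg, max_attempts=3):
    """Call task(arg); on an exception, try again up to max_attempts in total."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task(arg)
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure


def run_pipeline(orders):
    """Run clean -> aggregate -> load in dependency order and return the store."""
    warehouse = {}  # stand-in for a real destination
    tasks = {
        "clean": lambda results: clean(orders),
        "aggregate": lambda results: aggregate(results["clean"]),
        "load": lambda results: warehouse.update(results["aggregate"]),
    }
    # Each task maps to the set of tasks that must finish before it.
    dependencies = {"clean": set(), "aggregate": {"clean"}, "load": {"aggregate"}}
    results = {}
    for name in TopologicalSorter(dependencies).static_order():
        results[name] = run_with_retries(tasks[name], results)
    return warehouse
```

A real orchestrator adds scheduling, backoff between retries, and persistence of task state, but the core idea is the same: declare dependencies, derive the order, and retry failures before giving up.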

Batch Example: Weekly HR reports are slow but cheap.

Streaming Pitfall: Backpressure (overloaded queues). Fix it by adding Kafka partitions so more consumers can share the load.
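Why partitions help: each partition can be read by a different consumer, so splitting a topic spreads the work while keeping events for the same key in order. The sketch below illustrates key-based partitioning only. It uses CRC32 as a stable hash for simplicity (Kafka’s default partitioner uses a different hash function), and the function names are hypothetical.

```python
import zlib
from collections import defaultdict


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition using a stable hash, so a key always lands in the same place."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def split_by_partition(events, num_partitions):
    """Group (key, payload) events by partition, preserving arrival order within each."""
    partitions = defaultdict(list)
    for key, payload in events:
        partitions[partition_for(key, num_partitions)].append((key, payload))
    return partitions
```

Because the hash is deterministic, all events for one key stay on one partition, so per-key ordering survives even when several consumers read in parallel.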

Common Pitfalls and How to Bulletproof Your Pipeline

Explanatory power demands honesty: 70% of pipelines fail in production. Why?

- Data Quality Traps: Garbage in, garbage out. Solution: Great Expectations for schema tests.

- Scalability Snafus: Traffic spikes crash naive pipelines. Solution: Kubernetes autoscaling adds pods under load.

- Cost Creeps: Streaming guzzles compute. Optimize: Iceberg for efficient queries.

- Debug Nightmares: Black-box failures are hard to diagnose. Add tracing.
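For the data quality trap, the idea behind schema tests is simple enough to sketch by hand. The code below is not the Great Expectations API; it is a plain-Python illustration with a hypothetical order schema, showing how failing rows can be quarantined instead of loaded.

```python
# Hypothetical expected schema: field name -> required Python type
EXPECTED_SCHEMA = {"order_id": int, "product": str, "amount": float}


def validate(record, schema=EXPECTED_SCHEMA):
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    for field, expected_type in schema.items():
        if field not in record or record[field] is None:
            problems.append(f"{field}: missing")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


def split_valid(records):
    """Separate passing rows from rejected rows (kept alongside their problems)."""
    good, bad = [], []
    for record in records:
        issues = validate(record)
        if issues:
            bad.append((record, issues))
        else:
            good.append(record)
    return good, bad
```

Keeping the rejected rows together with the reasons they failed also helps with the debugging problem: a failure stops being a black box when the bad input is sitting next to its error message.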

Pro Tip: Handle schema evolution (adding fields without breaking consumers) with Avro or Protobuf.
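The mechanism that makes this work is default values: a reader on the new schema fills in defaults for fields that older records lack, and ignores fields it does not know about. The sketch below imitates that resolution rule in plain Python. It does not use the Avro or Protobuf libraries, and the schema and field names are hypothetical.

```python
# Hypothetical reader schema, version 2: "currency" was added with a default,
# so records written under version 1 (without it) can still be read.
READER_SCHEMA_V2 = [
    {"name": "order_id"},
    {"name": "amount"},
    {"name": "currency", "default": "USD"},
]


def resolve(record, reader_schema=READER_SCHEMA_V2):
    """Project a record onto the reader schema, filling defaults for missing fields."""
    resolved = {}
    for field in reader_schema:
        name = field["name"]
        if name in record:
            resolved[name] = record[name]
        elif "default" in field:
            resolved[name] = field["default"]
        else:
            raise ValueError(f"missing required field: {name}")
    return resolved  # fields unknown to the reader are silently dropped
```

The practical rule that follows: add new fields with defaults, and avoid removing or renaming fields that existing consumers still depend on.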

Why Pipelines Are Mission-Critical: Business Impact Unpacked

No pipeline? Siloed data leads to stale BI, biased AI, and failed audits.

Unlocks:

- Velocity: Uber’s pipes enable 1-sec ETAs.

- Democratization: Non-engineers query via Hex or Streamlit.

- AI Readiness: Clean flows for LLMs (e.g., fine-tune on pipeline-fed customer data).

- ROI: 5-10x faster insights; McKinsey says pipelines boost revenue 20%.

In 2026, with edge AI and zero-ETL (direct queries), pipelines evolve into “data meshes”: decentralized domains owning their own flows.

Hands-On: Build a Simple Pipeline in 30 Minutes

- Install Dagster (modern Airflow alt).

- Source: CSV sales file.

- Transform: Pandas → aggregate revenue.

- Load: PostgreSQL.

- Run: dagster dev, which launches a local web UI for running and monitoring the pipeline.

Scale to cloud: GCP Dataflow for serverless.

Data pipelines: the plumbing of progress. Build one and watch your data empire rise.

What specific pipeline (e.g., streaming for IoT) do you want a custom blueprint for?

FAQ: Data Pipelines Demystified

Got questions? Here’s the scoop on top searches.

ETL vs. ELT: ETL transforms before loading, which suits smaller data and strict schemas.

ELT loads raw data first, then transforms in the warehouse, which suits big data and flexibility.

Streaming is continuous, real-time processing in an ELT style. Modern warehouses favor ELT.

Batch vs. streaming: Batch runs on schedules and is simpler and cheaper.

Streaming handles real-time data but is more complex and expensive. Start with batch.

Free tools: Airflow, DuckDB, Pandas/dbt, MinIO, and the BigQuery sandbox are great free options.

Cost: $100–$10,000 per month depending on scale. Batch is cheaper; streaming costs more. Spot instances can reduce costs significantly.

Debugging: Check logs and metrics. Common problems include schema drift and resource limits. Restart safely to avoid duplicates.
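Restarting safely usually comes down to making the load step idempotent, so replaying a batch after a crash overwrites rows instead of appending copies. Below is a minimal sketch using sqlite3 with a hypothetical orders table keyed on order_id. In PostgreSQL the equivalent would be INSERT ... ON CONFLICT ... DO UPDATE.

```python
import sqlite3


def load_idempotent(conn, rows):
    """Write (order_id, amount) rows keyed by order_id; replays overwrite, never duplicate."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL)"
    )
    # The primary key makes a repeated order_id replace the existing row.
    conn.executemany(
        "INSERT OR REPLACE INTO orders (order_id, amount) VALUES (?, ?)", rows
    )
    conn.commit()
```

With a load like this, the safe recovery procedure is simply to rerun the failed batch from the start.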

ML integration: Use feature stores after pipelines. Pipelines move data; feature stores serve ML-ready features.

Open-source vs. managed: Open-source is flexible but requires more maintenance. Managed tools are easier but more expensive. A hybrid approach works well.

Trends: Zero-ETL systems, AI-driven pipeline optimization, and multimodal data processing are key trends.

Namratha L Shenoy | Data Engineer
